When AI and Machine Learning come to the forests

A big thank you to everyone who joined us last weekend for a lively and interesting discussion on data engineering and how to build prototypes that access satellite imagery using Google Earth Engine and Python. It’s always fun to talk about satellites, imagery and how to get things to work in many different clean technology sectors - agriculture, water, energy, climate and disaster management among them.

Today, let’s talk about one sector that doesn’t get as much attention - forestry.

If you hear the words “forests” and “satellite imagery” in one sentence, what comes to mind? Deforestation? Reforestation? Wildfires? All three?

Managing our forests sustainably is key to protecting the environment in so many different ways - forests have a huge impact on climate, on ecosystem services and on the livelihoods of communities that rely on them. However, the challenge is that most forests are hard to access and data is often difficult to verify on the ground.

But that’s where satellite imagery, artificial intelligence and data science in general shine.

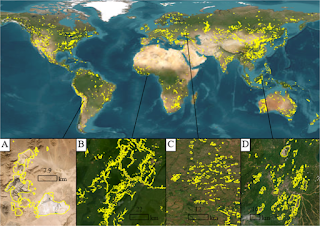

One of the most common applications of satellite imagery from a conservation standpoint is understanding how forests have changed over the years - in area covered, in the type of plant, animal and insect species there, and in how they are responding to changes in climate and the environment.
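To make the "area covered" part concrete, here is a minimal Python sketch of how a change in forest area might be estimated from two classified images. Everything in it is an assumption for illustration: the tiny 3x3 masks are made-up data, the two years are hypothetical, and the 30 m pixel size is just a typical value for Landsat-style imagery rather than anything tied to a specific dataset.

```python
def forest_area_ha(mask, pixel_size_m=30.0):
    """Return the area, in hectares, of pixels flagged as forest.

    mask is a list of rows; a truthy cell means "forest".
    pixel_size_m is the side length of one square pixel in metres
    (30 m is an assumed, Landsat-like resolution).
    """
    forest_pixels = sum(1 for row in mask for cell in row if cell)
    # One hectare is 10,000 square metres.
    return forest_pixels * pixel_size_m ** 2 / 10_000.0


# Made-up forest masks for the same patch of land in two hypothetical years.
mask_2000 = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
]
mask_2020 = [
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
]

area_then = forest_area_ha(mask_2000)
area_now = forest_area_ha(mask_2020)
change_pct = 100.0 * (area_now - area_then) / area_then

print(f"Forest area changed from {area_then:.2f} ha to {area_now:.2f} ha "
      f"({change_pct:+.1f}%)")
```

In a real workflow the masks would come from classified satellite scenes, and the hard part is making sure the two classifications are comparable, not the arithmetic.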

In the 1970s and 80s, the research community did a lot of work on figuring out how to understand what the satellite sees, how to translate that into parameters that are relevant in forestry and, finally, how to verify the results on the ground. Many papers were published on classification methods - techniques for converting wavelength and pixel data into an estimate of what is actually on the land. There were models that combined data from multiple satellites to generate a better picture of land use. Along the way came a steady stream of methods for tracking how errors and uncertainties propagate, for combining data at different spatial and temporal resolutions, and for working out how much verification on the ground was needed for the model results to be accurate.
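As a rough illustration of what "classification" means here, the sketch below turns two band values per pixel into a land-cover label using NDVI (the Normalized Difference Vegetation Index), which is computed as (NIR - Red) / (NIR + Red). This is one simple, widely used approach and not necessarily what any particular paper did. The 0.5 threshold, the label names and the sample reflectance values are all assumptions chosen for illustration; real thresholds are tuned against ground observations.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel.

    nir and red are reflectance values in the near-infrared and red bands.
    Returns 0.0 when both bands are zero to avoid dividing by zero.
    """
    total = nir + red
    if total == 0:
        return 0.0
    return (nir - red) / total


def classify_pixel(nir, red, threshold=0.5):
    """Label a pixel as dense vegetation or not.

    The threshold of 0.5 is an assumed, illustrative cut-off.
    """
    return "vegetation" if ndvi(nir, red) >= threshold else "other"


# Made-up (nir, red) reflectance pairs for three pixels.
samples = {
    "dense canopy": (0.50, 0.05),
    "bare soil": (0.30, 0.25),
    "open water": (0.02, 0.04),
}

labels = {name: classify_pixel(nir, red) for name, (nir, red) in samples.items()}

for name, label in labels.items():
    print(f"{name}: {label}")
```

Healthy vegetation reflects strongly in the near-infrared and absorbs red light, which is why the index separates canopy from soil and water. Checking labels like these against what is actually on the ground is the verification step the paragraph above describes.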

Other applications that looked interesting used satellite imagery and machine learning to monitor forest health, to better target when and where forests could be harvested for timber and other forest products, and to identify wildlife and other species in forests. While some of these applications had commercial value, they didn’t seem to be viable during the 1980s and 90s.

This was the initial period when neural networks and other machine learning tools were being developed and used. Of course, these tools were still in their infancy at that time - but the applications were quite clear, as was the fact that they would someday be quite valuable commercially if certain roadblocks could be overcome.

Those roadblocks included improving computational speed, access to high-quality, large-scale data, easy and low-cost data storage and access capabilities as well as better validation on the ground, improved integration of multiple data sources and better understanding of processes occurring in forests.

These days, many of the pain points related to computational speed, data storage and access have been solved, thanks to the high-performance computing resources available at relatively low prices from Amazon, Google, Microsoft and the other tech titans. In fact, if you visit Google’s Earth Engine website, you’ll notice that many of the applications they mention involve monitoring deforestation, helping communities identify protected areas, watching for wildfires and other forestry- and conservation-related work.

The challenge now is to work on things like verification on the ground (ground-truthing the data), integrating forest processes with remote sensing data, removing biases in models so that contributions from indigenous communities to forest health are not overlooked or disregarded and figuring out ways by which we can all work together in managing both forest health and the economic health of the communities that depend on them.

This means that many of us who work in agro-forestry, conservation and ecosystems need to become familiar with many of the latest tools in machine learning, data science and satellite imagery access in order to build better models and management systems. That usually means catching up on Google Earth Engine, Microsoft’s AI for Earth initiatives, AWS servers and how to set them up, and let’s not forget Python - with all the powerful machine learning and data visualization libraries.