Robots in Clean Technology: Navigating Oceans and Atmosphere Efficiently With AI and Biomimicry

If there’s one area in AI that’s seen tremendous growth in the last three years, it’s the use of robots, drones and satellites to collect data and perform tasks.

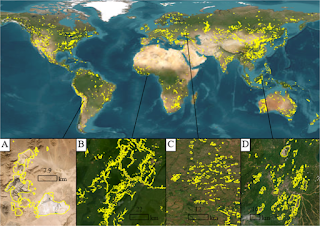

Satellite data has been used for clean technology applications since the early 1970s, analyzed with statistics and machine learning. Back then, the Landsat satellites (the first launched in 1972) were among the earliest Earth-observation satellites, and their data were used to understand land use and monitor environmental impacts from space. Today, of course, we’ve got a wide range of satellite data available for all kinds of applications: from traditional government sources like the Landsat and Copernicus satellites to commercial satellites launched by companies like Planet.

Similarly, we’ve seen an explosion in the use of robots and drones across clean technology, in applications ranging from ocean monitoring and repairing infrastructure such as oil and sewer pipes to maintaining solar arrays and wind turbines, cleaning up plastic pollution and harvesting fruits and vegetables.

For example, 2019 saw the launch of saildrones on the ocean: autonomous drones that are propelled by the wind and carry sensors powered by solar panels. These 20 ft tall drones, carrying over 200 pounds of instruments, were developed by Saildrone Inc. and have been deployed by NOAA to collect ocean data autonomously, with the end goal of augmenting the existing fleet of research vessels and data buoys. They can sail for months at a time, cover thousands of miles of ocean and continuously transmit data to on-shore operators via satellite. And since each drone functions as an independent, agile, mobile platform, it can collect data on a range of environmental conditions, including ocean biogeochemistry (CO2 and O2 concentrations, etc.), water conditions (wave velocity and direction, water temperature, etc.), ocean-atmosphere interactions and animal behavior (fish surveys, fur seal foraging, etc.).

So today, let’s talk about how the technology powering these drones is built!

Let’s start with one of the biggest challenges: navigation, or getting the drone to move efficiently to a target position. How exactly is this done? Usually, the drone is given the target coordinates and, if the surrounding conditions (also known as the background flow field) are known perfectly, an optimization algorithm can plot the best path. These are known as path planning algorithms, and they include graph search with a directed tree, mixed integer linear programming and swarm optimization, among others.
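To make the idea concrete, here is a minimal sketch of one member of the graph-search family, Dijkstra’s algorithm, run on a grid where the flow field is assumed to be known perfectly. The grid representation, the `plan_path` function and the simplified travel-time model (ground speed along a move equals the drone’s speed plus the flow component along that move, ignoring cross-flow) are illustrative assumptions, not any particular drone’s planner.

```python
import heapq
import math

def plan_path(flow, start, goal, speed=1.0):
    """Shortest-time path with Dijkstra's algorithm on a grid of KNOWN flow.

    flow[r][c] = (u, v): u is the flow along increasing column index,
    v along increasing row index (hypothetical convention for this sketch).
    Returns (list of cells, travel time), or (None, inf) if unreachable.
    """
    rows, cols = len(flow), len(flow[0])
    moves = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)]
    best = {start: 0.0}
    prev = {}
    heap = [(0.0, start)]
    while heap:
        t, cell = heapq.heappop(heap)
        if cell == goal:
            break
        if t > best[cell]:
            continue  # stale heap entry; a faster route was already found
        r, c = cell
        u, v = flow[r][c]
        for dr, dc in moves:
            nr, nc = r + dr, c + dc
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            dist = math.hypot(dr, dc)
            # Simplified model: the flow component along the move adds to the drone's speed.
            ground = speed + (u * dc + v * dr) / dist
            if ground <= 0:
                continue  # the current is too strong to make headway this way
            nt = t + dist / ground
            if nt < best.get((nr, nc), math.inf):
                best[(nr, nc)] = nt
                prev[(nr, nc)] = cell
                heapq.heappush(heap, (nt, (nr, nc)))
    if goal not in best:
        return None, math.inf
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    return path[::-1], best[goal]
```

For example, on a 3×5 grid with a uniform current of `(1.0, 0.0)`, the planner rides the current straight along the top row from `(0, 0)` to `(0, 4)` and reports a travel time of 2.0, half the 4.0 it would need in still water. The catch, as the next paragraph explains, is that this only works when `flow` is actually known.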

The challenge in using these drones in the ocean or the atmosphere is that (a) we don’t know the surrounding conditions perfectly, (b) these conditions can change rapidly and unpredictably, and (c) the drones themselves may change their surroundings simply through the way they move, as when multi-rotor drones fly near obstacles.
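The first of these challenges, imperfectly known conditions, is easy to quantify with a toy calculation. The sketch below assumes a constant current and a drone that flies a fixed, precomputed heading with no feedback; the function name and numbers are hypothetical. Because the plan only accounts for the assumed current, the drone misses by the current error multiplied by the flight time.

```python
import math

def open_loop_miss(target_dist, speed, assumed, actual, dt=0.01):
    """Plan a fixed heading toward a target straight ahead (+y) using the
    ASSUMED current, then fly that plan open loop in the ACTUAL current.
    Returns the miss distance. Assumes |assumed[0]| < speed."""
    ax, ay = assumed
    # Swim velocity that cancels the assumed cross-current at full speed.
    sx, sy = -ax, math.sqrt(speed ** 2 - ax ** 2)
    duration = target_dist / (sy + ay)   # planned time of arrival
    steps = max(1, round(duration / dt))
    h = duration / steps                 # fly for exactly the planned duration
    x = y = 0.0
    for _ in range(steps):
        x += (sx + actual[0]) * h
        y += (sy + actual[1]) * h
    return math.hypot(x, y - target_dist)
```

With no current assumed but a real cross-current of 0.5, a 10-unit trip at speed 1.0 ends 5 units off target; when the assumed and actual currents match, the miss is essentially zero.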

How can we overcome these challenges?

One approach is to look at the world around us, see whether nature has already solved this problem and figure out if we can mimic the natural solution: a bio-inspired, or biomimicry, method. In this case, biomimicry has provided several cutting-edge solutions to the problem of drone navigation. To date, scientists have successfully guided drones using approaches that mimic a school of fish swimming in the ocean, a swarm of bees navigating through the air and a hawk’s soaring technique.
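For a flavor of what fish-school-inspired coordination looks like in code, here is a minimal sketch of Craig Reynolds’ classic “boids” rules (separation, alignment and cohesion), which are widely used to model schooling and flocking. It illustrates the general idea only and is not the method used in the drone studies mentioned above; the `Agent` class, the `boids_step` function, and all weights and radii are hypothetical choices.

```python
import math
from dataclasses import dataclass

@dataclass
class Agent:
    x: float
    y: float
    vx: float
    vy: float

def boids_step(agents, dt=0.1, radius=5.0, sep_radius=1.0, w_cohesion=0.5,
               w_alignment=0.3, w_separation=1.0, max_speed=2.0):
    """Advance every agent one time step using the three boids rules.
    All agents are updated synchronously from the previous states."""
    updated = []
    for a in agents:
        # Each agent reacts only to neighbours within `radius` (local sensing).
        nbrs = [b for b in agents
                if b is not a and math.hypot(b.x - a.x, b.y - a.y) < radius]
        ax = ay = 0.0
        if nbrs:
            n = len(nbrs)
            # Cohesion: accelerate toward the neighbours' centre of mass.
            ax += w_cohesion * (sum(b.x for b in nbrs) / n - a.x)
            ay += w_cohesion * (sum(b.y for b in nbrs) / n - a.y)
            # Alignment: nudge velocity toward the neighbours' mean velocity.
            ax += w_alignment * (sum(b.vx for b in nbrs) / n - a.vx)
            ay += w_alignment * (sum(b.vy for b in nbrs) / n - a.vy)
            # Separation: push away from neighbours that are too close.
            for b in nbrs:
                d = math.hypot(b.x - a.x, b.y - a.y)
                if 0 < d < sep_radius:
                    ax += w_separation * (a.x - b.x) / d ** 2
                    ay += w_separation * (a.y - b.y) / d ** 2
        vx, vy = a.vx + ax * dt, a.vy + ay * dt
        speed = math.hypot(vx, vy)
        if speed > max_speed:  # cap speed, as a real vehicle would be limited
            vx, vy = vx * max_speed / speed, vy * max_speed / speed
        updated.append(Agent(a.x + vx * dt, a.y + vy * dt, vx, vy))
    return updated

def spread(agents):
    """Mean distance of the agents from their common centroid."""
    cx = sum(a.x for a in agents) / len(agents)
    cy = sum(a.y for a in agents) / len(agents)
    return sum(math.hypot(a.x - cx, a.y - cy) for a in agents) / len(agents)
```

No agent knows the global picture; each one follows simple rules based on what it senses nearby, and group behavior such as a tightening school emerges from those local interactions.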

While these approaches have all worked, a central challenge that remains is how to enable these drones to self-correct successfully when their surroundings change unexpectedly.

Engineers at Caltech, ETH Zurich and Harvard came up with an interesting approach to this problem. They combined machine learning with biomimicry, in this case mimicking how zebrafish and seals monitor their local environment and make small changes to their route to reach their goal. The engineers borrowed the idea of local environmental monitoring by adding sensors to the drone that monitored vorticity, flow velocity and pressure, and then used a machine learning algorithm (deep reinforcement learning) to learn an efficient route to the target.
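Deep reinforcement learning is too large to reproduce in a few lines, so the sketch below swaps in plain tabular Q-learning on Sutton and Barto’s “windy gridworld” textbook example, where a column-dependent wind pushes the agent off course. It shows only the core reinforcement learning loop: the agent is never told about the wind, yet learns a route to the goal from repeated attempts, with a reward of -1 per step so that shorter routes score higher. The function names and hyperparameters are illustrative assumptions, and there is no flow sensing here.

```python
import random

# Sutton & Barto's "windy gridworld": 7 rows x 10 columns.
ROWS, COLS = 7, 10
WIND = [0, 0, 0, 1, 1, 1, 2, 2, 1, 0]        # upward push in each column
START, GOAL = (3, 0), (3, 7)
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right

def step(state, action):
    """Apply the chosen move plus the wind (unknown to the agent), clipped to the grid."""
    r, c = state
    dr, dc = ACTIONS[action]
    r = min(max(r + dr - WIND[c], 0), ROWS - 1)  # row 0 is the top, so wind decreases r
    c = min(max(c + dc, 0), COLS - 1)
    return (r, c)

def greedy(q, s):
    """Action with the highest estimated value in state s."""
    return max(range(len(ACTIONS)), key=lambda a: q[s][a])

def train(episodes=500, alpha=0.5, gamma=1.0, epsilon=0.1, seed=0):
    """Tabular Q-learning with an epsilon-greedy behaviour policy."""
    rng = random.Random(seed)
    q = {(r, c): [0.0] * len(ACTIONS) for r in range(ROWS) for c in range(COLS)}
    for _ in range(episodes):
        s = START
        while s != GOAL:
            a = rng.randrange(len(ACTIONS)) if rng.random() < epsilon else greedy(q, s)
            s2 = step(s, a)
            # Reward -1 per step; the goal is terminal, so it has no future value.
            target = -1.0 + (0.0 if s2 == GOAL else gamma * max(q[s2]))
            q[s][a] += alpha * (target - q[s][a])
            s = s2
    return q

def greedy_route(q, max_steps=100):
    """Follow the learned greedy policy; return the visited cells, or None if it stalls."""
    s, route = START, [START]
    while s != GOAL and len(route) <= max_steps:
        s = step(s, greedy(q, s))
        route.append(s)
    return route if s == GOAL else None
```

Calling `greedy_route(train())` returns the cells the trained agent visits on its way to the goal. A deep reinforcement learning agent replaces the lookup table `q` with a neural network, which is what lets it handle continuous positions and sensor readings.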

They then tested this approach in a computer simulation, the idea being that if the approach performed well in a simulated environment, it would be worth building a prototype and then scaling it up.

The simulation was set up as a relatively controlled, unsteady von Kármán vortex street (a repeating pattern of swirling vortices), generated by simulating 2D incompressible flow past a cylinder. The starting positions of the particles representing the drones were generated randomly, so that different flow conditions were present at the starting point, and the end point, or target point, was placed downstream of the cylinder. The random starts ensured that the machine learning algorithm did not try to correlate the starting position with the background flow conditions, a correlation that does not exist in the real world.
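Running a full 2D incompressible flow solver is beyond a short example, so the sketch below swaps in a much cruder kinematic stand-in: a uniform stream plus two staggered rows of regularized point vortices with opposite spin that drift downstream, loosely following von Kármán’s point-vortex picture of a vortex street. It also shows randomized starts, drawing both a start position and a start time within the shedding cycle so that the start position carries no information about the flow phase. The functions, the parameter values and the choice to randomize the start time are assumptions for illustration.

```python
import math
import random

def vortex_street_velocity(x, y, t, U=1.0, gamma=1.2, a=2.0, h=0.56,
                           n_pairs=6, advect=0.8, core=0.2):
    """Velocity (u, v) at (x, y, t): free stream U plus a finite, staggered double
    row of regularized point vortices (hypothetical parameters; h / a = 0.28, near
    the spacing ratio von Karman identified as stable). The pattern drifts
    downstream at `advect` and repeats every a / advect time units; each period the
    farthest vortex drops off and a new one appears near x = 0, loosely mimicking
    shedding from a cylinder at the origin."""
    u, v = U, 0.0
    shift = (advect * t) % a
    for k in range(n_pairs):
        # Upper row spins clockwise (negative circulation); lower row spins
        # counter-clockwise and is staggered by half a spacing.
        for x0, y0, g in ((k * a + shift, h / 2, -gamma),
                          (k * a + a / 2 + shift, -h / 2, gamma)):
            dx, dy = x - x0, y - y0
            r2 = dx * dx + dy * dy + core * core  # core radius removes the singularity
            u += -g * dy / (2 * math.pi * r2)
            v += g * dx / (2 * math.pi * r2)
    return u, v

def sample_start(rng, x_range=(1.0, 3.0), y_range=(-1.0, 1.0), period=2.0 / 0.8):
    """Random start position AND random phase of the shedding cycle, so a learner
    cannot tie a start location to a particular flow state."""
    return rng.uniform(*x_range), rng.uniform(*y_range), rng.uniform(0.0, period)
```

A training loop would call `sample_start` once per episode and then query `vortex_street_velocity` at the particle’s position at every time step, both to move the particle and to produce its local sensor readings.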

By the way, this is a typical example of why machine learning and data science in clean technology need expertise in the system: boundaries and conditions have to be set up so that the algorithm does not produce a result that violates natural conditions.

So what were the results?

When the simulation was run, the engineers found that a particle with no way of sensing the local flow conditions reached the target only 1% of the time on average, because the swimming particles were swept away by the flow. This is similar to a swimmer caught in an ocean current while trying to reach the shore. When reinforcement learning alone was added (that is, the particle could learn from previous attempts to reach the target and adjust its strategy accordingly), the particles reached the target 40% of the time. When biomimicry, in the form of local flow sensing, was combined with reinforcement learning, the results were quite remarkable! A particle that could only detect vortices, similar to zebrafish, and could learn and adapt through the reinforcement learning algorithm reached the target 47% of the time. However, a particle that could sense flow velocity (like hawks) and use reinforcement learning to adapt reached the target a startling 99% of the time.

In other words, being able to sense the local flow velocity, and use its speed and direction to glide and make small course corrections, got the drone to the target almost every single time.
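The intuition can be illustrated without any learning at all. The sketch below is a simple feedback rule, not the study’s reinforcement learning policy: it compares a flow-blind drone that always points at the target with one that reads the local flow velocity and crabs into the current, so that its track over the ground heads straight for the target. The uniform 0.9 cross-current, the 30-time-unit budget and all function names are hypothetical.

```python
import math

def flow(x, y):
    """Hypothetical local flow: a uniform cross-current of 0.9 (swim speed is 1.0)."""
    return 0.9, 0.0

def heading(pos, target, speed, sensed):
    """Swim velocity. Flow-blind (sensed=None): point at the target. Sensing:
    cancel the cross-track part of the flow and spend the rest on progress."""
    dx, dy = target[0] - pos[0], target[1] - pos[1]
    d = math.hypot(dx, dy)
    ex, ey = dx / d, dy / d                     # unit vector toward the target
    if sensed is None:
        return speed * ex, speed * ey
    fx, fy = sensed
    along = fx * ex + fy * ey
    px, py = fx - along * ex, fy - along * ey   # cross-track component of the flow
    cross = math.hypot(px, py)
    if cross >= speed:                          # cannot fully cancel it; push straight against it
        return -speed * px / cross, -speed * py / cross
    a = math.sqrt(speed * speed - cross * cross)
    return -px + a * ex, -py + a * ey

def time_to_target(sensing, start=(0.0, 0.0), target=(0.0, 10.0), speed=1.0,
                   dt=0.01, t_max=30.0, tol=0.05):
    """Euler-integrate the drone's motion; return arrival time or None if out of time."""
    x, y = start
    t = 0.0
    while t < t_max:
        if math.hypot(target[0] - x, target[1] - y) < tol:
            return t
        fx, fy = flow(x, y)
        sx, sy = heading((x, y), target, speed, (fx, fy) if sensing else None)
        x += (sx + fx) * dt
        y += (sy + fy) * dt
        t += dt
    return None
```

Under these assumptions the flow-blind drone is swept downstream and is still short of the target when time runs out, while the sensing drone arrives in roughly 23 time units.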

Of course, this was a 2D computer simulation, and the results may change when we look at an actual drone in a 3D environment, but it’s definitely a promising start toward a successful navigation system for the drone.

The next step would be to build a prototype of this technology (a drone with sensors that can sense the local environment) and test it in a controlled 3D environment, such as a water tank with simulated ocean currents. If that works, the drone can be built and deployed in a pilot trial, perhaps in a small estuary or bay, followed by larger trials over a longer period in a designated area of the ocean. Finally, the technology would be ready for large-scale commercial deployment.

And that’s how technology like saildrones makes its way from an idea to being used by companies and organizations around the world!

If you’re interested in learning more about how AI is used in clean technology, check out our workshops or sign up for our weekly newsletter.