Predicting floods using models for Covid and other infectious diseases

What do Covid-19, social networks, traffic congestion and floods have in common? On the face of it, the answer would seem to be - nothing. But interestingly enough, all these systems can be represented in a similar fashion - as networks of connected nodes with spatial and temporal effects.

So, scientists and researchers often check whether models and ideas developed for one field can be adapted to problems in another field that has a similar representation. A really interesting study was published in Nature a couple of months ago, where researchers took models used to understand how infectious diseases like Covid spread and adapted them to predict floods in cities and urban systems.

How have floods and their impacts typically been modeled, and why do people care? To start with, people care about flooding because it directly impacts their lives: when areas have to be evacuated, how long the evacuation should last, and what the health consequences might be as industrial chemicals and other dangerous substances are released into floodwaters and then into local streams and rivers. For several years, scientists, policy makers, governments and non-profits have been working on flood models that can answer these questions accurately enough, and quickly enough, to support decisions about evacuations and response. Typically, flood models have been physical or process-based models, where the river or ocean systems and weather conditions are described by equations that govern the different processes occurring in these systems - for example, the duration of the hurricane, the speed and movement of water across a river basin or a city, and the locations that are under threat.

The difficulty with building these physics-based hydrodynamic models is that a lot needs to be known about the system at hand - and those data are not always easily collected in many regions around the world. There have been improvements with smart sensors, mobile phones and other devices - but, in general, building these models has required significant skill and inputs.

One way that scientists have tried to overcome these limitations is by combining machine learning models with the physical models to get results that are faster and more accurate. Bayesian networks, genetic algorithms and neural networks have all been used, and with them the accuracy of model predictions has increased to 80% and more while results arrive much faster. However, machine learning models need large amounts of historical data - again, data that may not always be available.

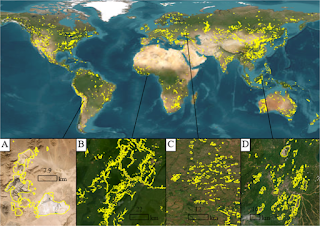

So, scientists tried an alternative approach. Cities now house large populations, and the impacts of flooding on human health and livelihoods there are significant, so a better way to model flooding in cities could have an outsize impact. And, interestingly enough, roads and flood zones in cities can be represented as a network of connected nodes - with tertiary roads flooding only once the main highways or roads that they connect to have flooded. This is very similar to the way in which infectious diseases spread among populations - and so, they tried building mathematical models for floods that were based on infectious disease models. In particular, they used the Susceptible - Infectious - Recovered (SIR) framework from epidemiology to create flood network models.
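For reference, the classic SIR model from epidemiology can be written as three coupled equations. The flood reading of the symbols here is an illustrative assumption, not the study's exact network formulation: take $S$ as the number of roads that are still dry but at risk, $I$ as the number currently flooded, $R$ as the number where the water has receded, $N = S + I + R$ as the total number of roads, $\beta$ as a spread rate and $\gamma$ as a recession rate.

$$
\begin{aligned}
\frac{dS}{dt} &= -\beta \frac{S I}{N} \\
\frac{dI}{dt} &= \beta \frac{S I}{N} - \gamma I \\
\frac{dR}{dt} &= \gamma I
\end{aligned}
$$

The three right-hand sides add up to zero, so the total $N$ stays constant: roads only move from one compartment to the next.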

The way this was done was to create compartmentalized networks of spatially connected roads, where tertiary roads are connected to the main roads that carry the bulk of the floodwaters. Each road is in one of three states. A dry road next to a flooded one is susceptible; as the main roads flood (i.e. they are infected), the tertiary roads connected to them get flooded in turn (i.e. they go from susceptible to infected); and as the water recedes from a road, it recovers. The model can thus be used to simulate the spread of floodwaters and how fast an area can recover from the flooding.
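To make the idea concrete, here is a minimal sketch of an SIR-style simulation on a tiny road network. It is not the study's implementation: the function name `simulate_flood_sir`, the four-road toy network, and the per-step probabilities `beta` (chance that a flooded neighbor floods a dry road) and `gamma` (chance that a flooded road drains) are all assumptions made up for illustration.

```python
import random


def simulate_flood_sir(neighbors, initially_flooded, beta, gamma, steps, seed=0):
    """Discrete-time SIR on a road network (illustrative sketch only).

    States: "S" = dry but at risk, "I" = flooded, "R" = water has receded.
    neighbors maps each road to the list of roads it connects to.
    """
    rng = random.Random(seed)  # seeded so repeated runs give the same result
    state = {road: "S" for road in neighbors}
    for road in initially_flooded:
        state[road] = "I"

    history = [dict(state)]
    for _ in range(steps):
        new_state = dict(state)  # update all roads from the same snapshot
        for road, status in state.items():
            if status == "S":
                flooded = sum(state[n] == "I" for n in neighbors[road])
                # Chance that at least one flooded neighbor spreads water here.
                p_flood = 1 - (1 - beta) ** flooded
                if rng.random() < p_flood:
                    new_state[road] = "I"
            elif status == "I":
                # A flooded road drains with probability gamma per step.
                if rng.random() < gamma:
                    new_state[road] = "R"
        state = new_state
        history.append(dict(state))
    return history


# Hypothetical toy network: one highway feeding an arterial road,
# which in turn feeds two tertiary side streets.
neighbors = {
    "highway": ["arterial"],
    "arterial": ["highway", "side_a", "side_b"],
    "side_a": ["arterial"],
    "side_b": ["arterial"],
}

history = simulate_flood_sir(neighbors, ["highway"], beta=0.6, gamma=0.3, steps=10)
for t, snapshot in enumerate(history):
    flooded_now = sorted(r for r, s in snapshot.items() if s == "I")
    print(t, flooded_now)
```

Each printed line shows which roads are flooded at that time step. In this sketch a side street can only flood after the arterial road it hangs off has flooded, which mirrors the main-road-then-tertiary-road ordering described above. Raising `beta` makes the water spread faster, and raising `gamma` makes the network recover sooner.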

This model was tested for the city of Houston and Harris County during Hurricane Harvey in 2017 and was found to increase the accuracy of the model predictions to 90% while obtaining the results faster and more efficiently.

This is just one technique that’s being deployed in the smart water sector - a sector that’s predicted to hit $22 billion in the United States in 5 years and probably be a trillion-dollar market in the next 10-15 years. If you’re interested in understanding the technology, data sources and algorithms being deployed in the smart water sector, join us on Saturday, November 21st for a live, online workshop on “Data, Models and Machine Learning in the Water Sector”.