Coding, Databases, GIS and other tools for a clean tech data scientist

As we saw in the last post, a data scientist's role requires the ability to capture, process, analyze and visualize the data.

While some off-the-shelf software tools exist, most applications in the clean tech and data science space require knowledge of a programming language to perform many of the tasks effectively.

The popular choices for a clean tech data scientist are:

1. Python: Python is probably the single most critical element in the data scientist’s toolkit. It’s a flexible, easily learnt computer language that is powerful because of the large stack of libraries that have been developed. Do you need to figure out how to get data from a website – or train a machine learning algorithm? The chances are that there is an existing library in Python that can be plugged into your code.

The main libraries needed for most data science use cases are SciPy, NumPy, statsmodels and pandas. Together with the wider Python ecosystem, they can be used for building predictive models using machine learning, processing satellite images, and handling information scraped from multiple sensors and websites.
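As a rough illustration of how these libraries fit together, the sketch below uses pandas to average readings per sensor and NumPy to flag high values. The sensor names, the readings and the threshold are all made up for the example; they are not real measurements or regulatory limits.

```python
import numpy as np
import pandas as pd

# Hypothetical PM2.5 readings (micrograms per cubic metre) from two made-up sensors.
readings = pd.DataFrame(
    {
        "sensor_id": ["A", "A", "A", "B", "B", "B"],
        "pm25": [12.0, 15.0, 18.0, 40.0, 35.0, 45.0],
    }
)

# pandas: average concentration per sensor.
mean_by_sensor = readings.groupby("sensor_id")["pm25"].mean()

# NumPy: flag readings strictly above an illustrative threshold (an assumption).
THRESHOLD = 35.0
exceeds = np.asarray(readings["pm25"]) > THRESHOLD

print(mean_by_sensor)
print("Readings above threshold:", int(exceeds.sum()))
```

The same pattern scales to real sensor files by swapping the hand-written table for something like `pd.read_csv`.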

2. R: R is a powerful language and software environment that is especially useful for statistical analysis of data. While there are packages that can be used to build predictive models or do machine learning, they are usually not as versatile as the ones that Python provides. R is an excellent tool for the initial, exploratory analysis of data and for quick visualizations. For anyone who uses MATLAB, R provides much of the same functionality – but without the license costs.

3. FORTRAN: FORTRAN is an older programming language, but it’s used extensively in existing environmental software. Even if you don’t program in FORTRAN, you should be able to read and understand the code underlying many environmental applications.

The second element is the choice of where data needs to be housed and the type of data that is generated.

4. Database Tools - PostgreSQL, PostGIS and MongoDB: If you have data, you need to store it and have it readily accessible. The most popular open source database system for structured data these days is PostgreSQL. Structured data is data that can be divided into a fixed set of columns. An example would be data from an air pollution sensor – each record could have a latitude, a longitude and a pollutant concentration value. PostgreSQL is easy to install on macOS, Windows or any UNIX/Linux machine.

PostGIS is an extension to PostgreSQL that almost every clean tech application will need, because it is one of the most convenient ways to deal with data that has a location tagged onto it. Its main use is in storing, querying and analyzing location information directly in the database. For example, if you need to figure out how many people are in the vicinity of the sensor that measures air pollution, PostGIS will let you sort and analyze the data by location and distance.
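To make the "people in the vicinity of a sensor" idea concrete, here is a minimal pure-Python sketch of the underlying distance calculation. It is not PostGIS itself: in PostGIS the same question would typically be asked with a spatial function such as `ST_DWithin` inside a SQL query. The sensor location, the place list and the function names are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, an approximation


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two latitude/longitude points, in km."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (
        math.sin(dphi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    )
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def population_within(sensor, places, radius_km):
    """Sum the population of all places within radius_km of the sensor."""
    return sum(
        p["population"]
        for p in places
        if haversine_km(sensor[0], sensor[1], p["lat"], p["lon"]) <= radius_km
    )


# Hypothetical sensor location and nearby settlements (made-up numbers).
SENSOR = (0.0, 0.0)
PLACES = [
    {"name": "village_1", "lat": 0.0, "lon": 0.01, "population": 1000},
    {"name": "town_1", "lat": 0.0, "lon": 0.5, "population": 5000},
    {"name": "village_2", "lat": 0.02, "lon": 0.0, "population": 300},
]

print(population_within(SENSOR, PLACES, radius_km=5.0))
```

A spatial database does the same filtering with spatial indexes, which matters once the table holds millions of points rather than three.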

Not all data these days can be structured into columns – much of what is generated by people is unstructured data. This includes web page content and social media data such as Facebook posts and tweets. This kind of data is often best stored in what is called a NoSQL database – one of the most popular of these being the open source tool MongoDB.
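The difference from column-based data is easiest to see with a few records. The sketch below uses plain Python dictionaries parsed from JSON to stand in for documents; the posts are invented, and no MongoDB server or driver is involved. In a document store, each of these records could be saved as-is even though their fields differ.

```python
import json

# Hypothetical posts from different sources; note that the fields differ.
raw_posts = [
    '{"source": "twitter", "text": "Air quality is bad today", "hashtags": ["smog"]}',
    '{"source": "facebook", "text": "Solar panels installed!", "likes": 12}',
    '{"source": "web", "url": "https://example.org/report", "title": "Annual report"}',
]

documents = [json.loads(post) for post in raw_posts]

# There is no single fixed set of columns: collect every field that appears.
all_fields = sorted({key for doc in documents for key in doc})

# Query by content, tolerating documents that have no "text" field at all.
mentions_air = [doc for doc in documents if "air" in doc.get("text", "").lower()]

print(all_fields)
print(len(mentions_air))
```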

It should be noted here that all three database systems can be easily accessed and manipulated using Python and Python’s powerful libraries. This is one reason why Python is preferred in data science circles – it allows the scientist to access the data and build the predictive model in the same program very easily.
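A minimal sketch of that "query and model in one program" workflow follows. It uses Python's built-in `sqlite3` module as a stand-in so that it runs without a database server; with PostgreSQL you would connect through a driver such as `psycopg2` instead, and the SQL would look much the same. The table name, columns and readings are assumptions made up for the example.

```python
import sqlite3

# In-memory SQLite database standing in for a PostgreSQL server.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE readings (hour INTEGER, latitude REAL, longitude REAL, pm25 REAL)"
)
conn.executemany(
    "INSERT INTO readings VALUES (?, ?, ?, ?)",
    [
        (0, 12.97, 77.59, 20.0),
        (1, 12.97, 77.59, 22.0),
        (2, 12.97, 77.59, 24.0),
        (3, 12.97, 77.59, 26.0),
    ],
)

# Step 1: pull the data out with SQL.
data = conn.execute("SELECT hour, pm25 FROM readings ORDER BY hour").fetchall()
conn.close()

# Step 2: fit a straight line by ordinary least squares in the same program.
n = len(data)
mean_x = sum(x for x, _ in data) / n
mean_y = sum(y for _, y in data) / n
slope = sum((x - mean_x) * (y - mean_y) for x, y in data) / sum(
    (x - mean_x) ** 2 for x, _ in data
)
intercept = mean_y - slope * mean_x

# Step 3: use the fitted line to predict the next hour.
predicted_next = intercept + slope * 4
print(slope, intercept, predicted_next)
```

A straight-line fit is only a placeholder for a real predictive model; the point is that the query and the modelling live in one script.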

5. Hadoop, MapReduce, Spark and Pig: These are the tools that are used to deal with genuinely big data – data in the petabyte range – the kind of data that the Googles and Facebooks of the world have to work with. These systems allow for parallel storage and parallel processing of large volumes of data. The MapReduce approach was first described by Google; Hadoop is an open source implementation of those ideas, and Hadoop, Spark and Pig are now Apache projects that are extremely popular in many applications.

However, they are more complex and difficult to set up compared to the traditional database systems. It’s often easiest to prototype the analysis using the database tools in 4 and then build a Hadoop cluster if the data stream is really that large.
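The MapReduce idea itself is simple enough to sketch on a single machine. The example below runs the map, shuffle and reduce steps in plain Python to compute an average per sensor. It is only an illustration of the pattern, not Hadoop or Spark code, and the records and function names are invented. On a real cluster, the map and reduce functions would run in parallel on many machines.

```python
from collections import defaultdict

# Hypothetical (sensor_id, reading) records; on a cluster these would be
# spread across many machines.
records = [
    ("sensor_a", 10.0),
    ("sensor_b", 30.0),
    ("sensor_a", 20.0),
    ("sensor_b", 50.0),
    ("sensor_c", 5.0),
]


def map_phase(record):
    """Emit a (key, value) pair: the sensor id and a (sum, count) tuple."""
    sensor_id, value = record
    return sensor_id, (value, 1)


def shuffle(pairs):
    """Group all values that share the same key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Combine the grouped (sum, count) tuples into one average per key."""
    total = sum(v for v, _ in values)
    count = sum(c for _, c in values)
    return key, total / count


grouped = shuffle(map(map_phase, records))
averages = dict(reduce_phase(key, values) for key, values in grouped.items())
print(averages)
```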

6. TensorFlow, PyTorch and other machine learning libraries: Machine learning libraries are well developed in both the Python ecosystem (for example scikit-learn, which builds on SciPy) and R. These libraries are most useful during the prototyping stage and require significant tweaking before they can be used to analyze petabyte-scale data. Further, as deep learning has become more popular, the deep learning libraries PyTorch and TensorFlow have become an essential addition to the data scientist’s toolkit. TensorFlow, in particular, is a deep learning platform that was developed by Google, and recent versions allow data scientists to build deep learning models relatively easily.

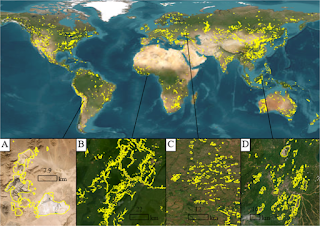

After storing, processing and analyzing the data, the final stage is presenting it in a format that a layperson can easily understand. Most cleantech data lends itself to being represented on maps because it usually has a spatial component.

7. Geographic Information Systems (GIS): Most environmental and clean tech applications use GIS in some form or the other to visualize and integrate different data sources. The most popular is the commercially available ArcGIS, developed by ESRI. ArcGIS allows the user to develop polished systems without needing to know how to code, and supports a wide range of functions that are specifically targeted towards the cleantech sector.

The open source alternative QGIS is also popular among developers and is gaining traction among clean tech professionals as well. QGIS allows for integration with Python and has a number of plugins – however, it does require time and some basic expertise to set up the system and create visualizations.

8. Google: Google Maps and Google Earth are powerful tools to present data in an interactive format. It’s always nice to be able to visualize data in real time, and Google’s tools allow the user to do that through their APIs. Additionally, Google Earth Engine is one of the most useful tools to obtain satellite data. The advantage of using these tools is that many of them are free to use, at least for small-scale or non-commercial work, and since they are based on Google’s powerful infrastructure, you can generally expect to get the data quickly and reliably.
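One practical way to move point data into mapping tools is to export it as GeoJSON, an open format that QGIS can open directly and that the Google Maps JavaScript API can load into a data layer. The sketch below builds a GeoJSON `FeatureCollection` from a few made-up sensor readings using only the standard library. The function name, coordinates and values are hypothetical.

```python
import json


def to_geojson(readings):
    """Convert a list of sensor readings into a GeoJSON FeatureCollection."""
    features = [
        {
            "type": "Feature",
            # GeoJSON stores coordinates as [longitude, latitude].
            "geometry": {"type": "Point", "coordinates": [r["lon"], r["lat"]]},
            "properties": {"sensor_id": r["sensor_id"], "pm25": r["pm25"]},
        }
        for r in readings
    ]
    return {"type": "FeatureCollection", "features": features}


# Hypothetical readings (made-up locations and values).
sensor_readings = [
    {"sensor_id": "A", "lat": 12.97, "lon": 77.59, "pm25": 42.0},
    {"sensor_id": "B", "lat": 13.08, "lon": 80.27, "pm25": 31.5},
]

geojson = to_geojson(sensor_readings)
geojson_text = json.dumps(geojson, indent=2)
print(geojson_text)
```

Writing `geojson_text` to a file with a `.geojson` extension gives a layer that can be styled by the `pm25` property in the mapping tool of your choice.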