Suitcases and pipes: Making machine learning work for clean water - Part I

When are machine learning and data science useful in the water sector? Are they useful if you have a large system with lots of data? Or are they useful if you’re looking at small-scale, decentralized systems?

The answer, as you might have guessed, is both. The difference is in the type of tools and algorithms being deployed and the results that are being sought - but, in both cases, machine learning and data science provide invaluable help in getting people access to clean, safe drinking water.

Let’s take a look at two very different applications of water tech. One is for a water utility that is attempting to improve its systems to ensure that clean, safe water continues to flow to the citizens of the city and the other is for a small, rural community that needs cheap, reliable access to clean water. Interestingly, while the goals and requirements of both these applications are very different, the thread that connects both of them is machine learning.

Today, we’ll talk about the challenges the water utility faced and how machine learning and data science were brought to bear on the problem. Next time, we’ll talk about the small, decentralized system - a portable desalination system in a suitcase!

Probably the most common challenge water and wastewater utilities face is how to manage their “invisible” assets - the pipes, connectors and valves that comprise the pipeline infrastructure. In many cities around the world, this infrastructure was built 50-100 years ago and is often buried underground. Figuring out which pipes are at risk, which ones need to be replaced, what kind of maintenance might be required and when to schedule it can be challenging. If repairs and maintenance are left for too long, there could be breakages or contamination problems and members of the community would no longer have access to clean water. However, if systems are replaced prematurely, the utility loses money. And if the wrong parts of the system are chosen for replacement, older, riskier pipes may remain in place, which increases the risk to the community’s water.

So, how do we solve this problem?

Typically, utilities use historical data and knowledge about the pipeline network to determine which pipes are the oldest and hence contribute the most risk to the system, manually monitor those pipes, and then replace them either when a breakage looks imminent or on a specific schedule.

This is the kind of system where data science and machine learning can often make a huge difference - but how is it done?

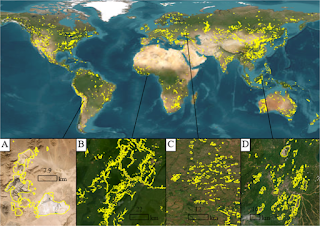

First question - what kinds of data are available that are relevant to solving the problem of managing utility infrastructure and risk? There’s historical data on pipeline locations, pipe size, materials and the time of installation; sensor data on water flow through and pressure in the pipes; and satellite data on ground conditions, weather, population dynamics, and buildings served by the pipelines.

Second question - how can all these data be integrated and models built to produce useful results? There are several approaches that can be taken depending on how quickly the answer is needed and the level of accuracy that is sufficient to make a decision.

The first and perhaps the most straightforward approach is to use a standard statistical analysis on existing utility data. Here, we simply use the historical data about the pipelines to group similar pipelines using similarity-based methods such as k-means clustering or k-nearest neighbors, and then rank the groups by their probability of failure. The probability of failure can be calculated as a weighted combination of the different pipeline parameters (age, material, size, service time). If a risk model is not available, then a neural network could be used to learn the relationship between those parameters and the probability of failure instead. The model will still be reasonably accurate and the results can be obtained quickly.
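To make the first approach concrete, here is a minimal sketch in Python using only the standard library. Everything in it is an assumption made for illustration: the pipe records, the material risk values, the weights and the rule that smaller pipes are riskier are all invented, and the result is a relative risk score rather than a calibrated probability of failure. The k-means routine is a bare-bones version with a fixed starting point; a real analysis would more likely use a library such as scikit-learn and weights fitted to actual break records.

```python
from statistics import mean

# Hypothetical pipe segments: (segment id, age in years, material, diameter in mm).
PIPES = [
    ("A1", 95, "cast_iron", 150),
    ("A2", 88, "cast_iron", 200),
    ("B1", 40, "ductile_iron", 300),
    ("B2", 35, "ductile_iron", 250),
    ("C1", 10, "pvc", 150),
    ("C2", 5, "pvc", 100),
]

# Illustrative values only; a utility would calibrate these against its own data.
MATERIAL_RISK = {"cast_iron": 1.0, "ductile_iron": 0.5, "pvc": 0.2}
WEIGHTS = {"age": 0.6, "material": 0.3, "size": 0.1}


def features(pipe):
    """Scale each parameter to the range 0..1 so the weights are comparable."""
    _, age, material, diameter = pipe
    age_score = min(age / 100.0, 1.0)
    material_score = MATERIAL_RISK[material]
    size_score = 1.0 - min(diameter / 300.0, 1.0)  # assumption: smaller pipes are riskier
    return (age_score, material_score, size_score)


def risk_score(feat):
    """Weighted combination of the scaled parameters (a score, not a probability)."""
    age, material, size = feat
    return WEIGHTS["age"] * age + WEIGHTS["material"] * material + WEIGHTS["size"] * size


def kmeans(points, k, iterations=20):
    """Plain k-means; starts from evenly spaced points to keep the example repeatable."""
    step = len(points) // k
    centroids = [points[i * step] for i in range(k)]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assign every point to its nearest centroid (squared Euclidean distance).
        labels = [
            min(range(k), key=lambda c: sum((a - b) ** 2 for a, b in zip(p, centroids[c])))
            for p in points
        ]
        # Move each centroid to the mean of the points assigned to it.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = tuple(mean(dim) for dim in zip(*members))
    return labels


def rank_clusters(pipes, k):
    """Cluster the pipes, then sort the clusters by mean risk score, highest first."""
    feats = [features(p) for p in pipes]
    labels = kmeans(feats, k)
    clusters = {}
    for pipe, feat, label in zip(pipes, feats, labels):
        clusters.setdefault(label, []).append((pipe[0], risk_score(feat)))
    ranked = [
        (label, mean([score for _, score in members]), [seg for seg, _ in members])
        for label, members in clusters.items()
    ]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


for label, avg_risk, segments in rank_clusters(PIPES, k=3):
    print(f"cluster {label}: mean risk {avg_risk:.2f}, segments {segments}")
```

With this toy data the two old cast-iron segments end up in the same cluster, and that cluster ranks first. The useful part is the shape of the workflow: scale the parameters, group similar pipes, then rank the groups so that inspection effort goes to the riskiest ones.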

This was the approach used by the City of Raleigh and Xylem to analyze the state of the City’s water infrastructure. Raleigh has 2,340 miles of pipelines with over 88,000 pipe segments, some of which are over 100 years old, and 600,000 customers. By performing a cluster analysis together with other machine learning techniques, they were able to identify the top 1% of at-risk pipeline segments in 16 clusters. This allowed them to reduce their planning and response time by 75% and better monitor the state of their infrastructure.

The second approach would be to combine the historical data with smart sensors. In this case, we would have a model that continuously updates itself as sensor data comes in. We would still have the risk model developed in the first approach - but we would add a second model that detects anomalies in the sensor readings and feeds them into the risk assessment. This gives us a two-tier warning and assessment system. The warning system would flag areas of the network that need immediate attention or maintenance in the near term (days or weeks) based on the anomaly detection model for the smart sensors. The risk assessment system would cover the areas that need replacement and the risk associated with them further out (e.g. in six months to a year). This would be a more complex approach and would require more time to implement - but would also add more value.
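The two-tier idea can be sketched roughly as follows, again in plain Python. This is only one simple way to do it, not a description of any deployed system: the `SegmentMonitor` class, the tier names, the thresholds and the pressure readings are all hypothetical, and a rolling z-score stands in for whatever anomaly detection model a utility would actually choose.

```python
from collections import deque
from statistics import mean, stdev


class SegmentMonitor:
    """Tracks recent sensor readings for one pipe segment (hypothetical design)."""

    def __init__(self, segment_id, static_risk, window=24,
                 z_threshold=3.0, risk_threshold=0.7):
        self.segment_id = segment_id
        self.static_risk = static_risk          # long-term score from the risk model
        self.readings = deque(maxlen=window)    # rolling baseline of normal readings
        self.z_threshold = z_threshold          # assumed cut-off for "anomalous"
        self.risk_threshold = risk_threshold    # assumed cut-off for "plan replacement"

    def is_anomalous(self, reading):
        """Rolling z-score test against the recent baseline."""
        if len(self.readings) < 5:
            return False  # not enough history yet to judge
        sigma = stdev(self.readings)
        if sigma == 0:
            return reading != self.readings[0]
        return abs(reading - mean(self.readings)) / sigma > self.z_threshold

    def update(self, reading):
        """Take one new reading and return the tier this segment falls into."""
        if self.is_anomalous(reading):
            # Tier 1: near-term warning (days or weeks). The anomalous reading is
            # left out of the baseline so that it does not mask later anomalies.
            return "warning"
        self.readings.append(reading)
        if self.static_risk >= self.risk_threshold:
            # Tier 2: longer-term replacement planning (six months to a year).
            return "planned"
        return "monitor"


# Made-up pressure readings (psi) for a segment with a high static risk score.
monitor = SegmentMonitor("A1", static_risk=0.92)
for pressure in [60.1, 59.8, 60.3, 60.0, 59.9, 48.0, 60.2]:
    print(pressure, monitor.update(pressure))
```

Here the sudden drop to 48.0 is flagged as a near-term warning, while the ordinary readings leave the segment in the longer-term planning tier because of its high static risk. One simplification to be aware of: because anomalous readings never enter the baseline, a genuine, lasting shift in pressure would keep raising warnings until someone resets the monitor.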

The third approach would also incorporate process-based pipeline models, soil models and water resources models under climate change scenarios. Here, we’re not just evaluating and predicting risk to the pipeline infrastructure under current conditions - we’re also analyzing and predicting the possibility of failure under different conditions. As an example, let’s say that the community is suffering from a drought as the climate changes - which then results in excess groundwater being pumped. This in turn causes the ground to subside. How then does that impact the integrity of the buried water pipelines? In such a situation, we’re looking at providing value in the future - and understanding what the risk to the utility and community is likely to be 10 or 20 years later.

As can be seen, having an understanding of the system and an ability to adapt and transform machine learning and data science techniques becomes invaluable!