Adapting AI for the Planet

The last couple of months have been interesting from a climate viewpoint - we’ve seen a record number of climate related disasters around the globe - drought, floods, fires, heat waves…..and it looks like this is probably going to be what our planet will look like in the near future. Add to that the COP26 conference that is scheduled for October 31st - and climate, sustainability and technology are front page news!

So, let’s talk about one of the technologies in the news - artificial intelligence (AI) and its impact on climate, water, agriculture, energy, forestry, ecosystems and other sectors in clean technology.

AI and its subset of tools - machine learning (ML), data science and statistics - are being touted as one of the key technologies in solving the problems facing the planet today. And while these technologies are certainly powerful - applying them effectively to solve problems in clean tech is another issue altogether.

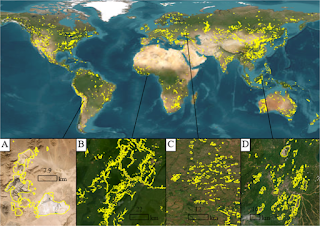

AI has been used by scientists in different clean tech sectors since the 1970s. In fact, some of the first classification algorithms that were used at scale were those developed by remote sensing scientists in the 1970s to analyze data from the Landsat satellites. These algorithms were first developed to identify land types from space - for example to answer questions like what’s a forest, what’s a grassland, how large is that body of water. These were then followed by harder classification problems - e.g. can we identify crop species in a field from space and map the extent of agricultural productivity? Solving these kinds of problems requires expertise in crops,plants and ecosystems in order to identify useful data and an ability to code and build algorithms that can scale. This is partly because it’s relatively easy to identify a forest or grassland or agricultural area from an image - but trying to figure out the combination of plants in a grassland is much tougher. How do you identify which field is growing wheat as opposed to rice or corn?

That problem is solved using a combination of neural networks, hyper spectral imagery, additional sensor data and verification of the algorithm’s results on the ground. Interestingly, the ground verification is probably the most critical component of building the model - the results from the verification feed into the model to teach the neural network if its predictions were accurate or not and that helps the model improve for the next season of collecting data. If you go back and look at the satellite crop data for the United States starting from the 1990s, you’ll see the steady improvement in how accurate the model was in predicting the major crops - it starts at around 60-70% accuracy and then improves to 95-99% depending on the crop.

This is a fairly typical example of AI in the clean technology sector - but what’s changed in the last few years is the sheer volume and variety of data now available. In fact, many agencies now have to process Terabytes of data every day in close to real time and at very high precision. And then we have high resolution satellite imagery, hyper spectral cameras with multiple spectra beyond the typical red-green-blue(RGB) spectra found in photographs and computer vision problems, drones and in-situ sensor data that are often collected at widely varying scales - we’re seeing a deluge of Earth systems data!

Additionally, Earth systems are dynamic and dependencies over space and time need to be accounted for (e.g. mapping cumulative rainfall or number of sunny days in a period to identify drought conditions). This makes it challenging for typical machine learning algorithms because machine learning algorithms focus on the data - which in turn can lead to errors because of assumptions leading to naive extrapolation, ignoring physical constraints and sampling or data bias. For example, trying to predict the spread of a wildfire by looking primarily at weather conditions (is it hot? are there lighting strikes in the region? is there a drought) and the fuel conditions (how much vegetation is there to burn? how dry is it?) will lead to errors as the extent to which the fire spreads will also depend on terrain, instantaneous wind speed, the direction of the wind at the time and how the vegetation are connected to each other.

This is where a combination of physical models, expertise in the subject and the mathematical and computational skills to build a useful model come into play. The scientist or engineer needs to be able to understand the system well enough to identify what data are useful, what parameters are most important, when the model’s results make sense and when they don’t, and how to code all that into a machine learning algorithm that can work with billions and billions of data points. Similar to what we saw in the healthcare sector last time - “we need the expertise to spot the flaws in the data and assumptions and the mathematical and computational skills to compensate for those flaws”.