Data Science for the Energy-Food-Water Nexus

One of the most interesting aspects of working in clean technology is the interaction between the different sectors in the space: the food-energy-water nexus, for example.

What is the food-energy-water nexus? Well, since we’re not in the Star Trek universe or any other science fiction arena, we’re definitely not talking about black holes of energy where food and water go to die :)! When we talk about a nexus between clean tech sectors, we are asking how those sectors interact with each other. How complex are the interactions, and can the relationships between them be described? In the case of the food-energy-water nexus, we’re exploring the interactions between the energy, water and food sectors. For example, we need water to grow food and produce energy, but energy is also needed to pump groundwater and to process food.

Just for fun, let’s take a look at some numbers on the food-energy-water nexus from the UN and FAO. Let’s start with the biggest one: agriculture. Agriculture uses approximately 70% of the world’s freshwater resources for crop production, and close to 80% of global cropland depends on rainfall to grow crops. At the same time, food production and its supply chain are responsible for approximately 30% of global energy consumption. When we turn to the energy sector, the numbers are no less daunting. Almost 75% of industrial water withdrawals are used for energy production, from both conventional and renewable sources. And in developed regions such as the US and Europe, cooling power plants accounts for between 40% and 50% of freshwater withdrawals. At the same time, treating water and wastewater to adequate standards and ensuring sufficient water is available, through groundwater pumping or water transport, both require energy.

As we see, each of these sectors is strongly dependent on the others, and understanding the interactions and behaviors of these inter-related systems is extremely difficult.
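
To make the idea of "describing the relationships" a little more concrete, here is a minimal Python sketch that writes down the dependencies mentioned above as a small directed graph and then looks for sectors that depend on each other. It is an illustration under stated assumptions, not a real nexus model: the `DEPENDS_ON` dictionary and both helper functions are hypothetical names made up for this example, and the graph contains only the links named in this post.

```python
# Illustrative only: these edges are the dependencies named in the post,
# not a complete or quantitative model of the nexus.
# Each key is a sector; its value maps the sectors it relies on to the reason.
DEPENDS_ON = {
    "food": {"water": "growing crops", "energy": "processing food"},
    "energy": {"water": "energy production and power plant cooling"},
    "water": {"energy": "groundwater pumping and water treatment"},
}


def mutual_dependencies(graph):
    """Return sorted pairs of sectors that each depend directly on the other."""
    pairs = set()
    for sector, needs in graph.items():
        for other in needs:
            if sector in graph.get(other, {}):
                pairs.add(tuple(sorted((sector, other))))
    return sorted(pairs)


def upstream(graph, sector):
    """Return every sector that `sector` relies on, directly or indirectly."""
    seen = set()
    stack = list(graph.get(sector, {}))
    while stack:
        current = stack.pop()
        if current in seen or current == sector:
            continue
        seen.add(current)
        stack.extend(graph.get(current, {}))
    return seen


print(mutual_dependencies(DEPENDS_ON))
print(sorted(upstream(DEPENDS_ON, "food")))
```

Even in this toy version, water and energy show up as a feedback loop, which is exactly the kind of circular relationship that makes these systems hard to reason about once real quantities are attached to each link.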

But that’s where data, models and machine learning really start to make things interesting! The systems that we have are so complicated and inter-connected, and the questions that we need answered range from simpler ones, like understanding the energy use of different parts of a water treatment plant, to complicated ones, like how increasing the acreage of almonds can impact water availability in California.

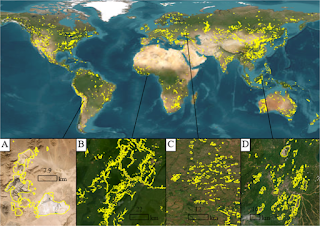

Earlier, scientists, companies and policy makers had to rely on simplifying assumptions in order to build and solve their models. Today, however, we can create a dizzying array of complicated, interacting models that combine big data such as satellite images with machine learning algorithms, power plant models and all kinds of other tools to generate and answer our questions about all these systems. And we can do that at scale: from answering the smaller, simpler questions about a single system, to exploring the interactions between different systems, to working on “Digital Twins” of the Earth.